As a growing tech hub, Austin has become a premier testing ground for autonomous vehicle technology. With Tesla’s Gigafactory Texas right in our backyard, Model 3s, Model Ys, and even Cybertrucks are a staple of MoPac and I-35.

However, as more drivers engage Autopilot and Full Self-Driving (FSD) features, the number of accidents linked to these systems has risen. If you or a loved one has been involved in a collision with a self-driving car, you likely have one pressing question: Who is liable when the car was supposedly “driving itself”?

At Joe Lopez Law, we understand the complex intersection of Texas personal injury law and emerging automotive technology. This guide breaks down the legal landscape of Tesla accidents in Austin and what you need to know about pursuing a claim.

What is Tesla Autopilot vs. Full Self-Driving?

It is a common misconception that Teslas are fully autonomous. In reality, Tesla currently offers two primary suites of “Advanced Driver Assistance Systems” (ADAS), both of which require an attentive human driver:

- Autopilot: This is a standard suite of features that includes Traffic-Aware Cruise Control and Autosteer (keeping the car in its lane). It is designed for use on controlled-access highways.

- Full Self-Driving (FSD): Despite the name, FSD is currently classified as SAE “Level 2” automation. It can navigate interchanges, change lanes, and recognize stop signs or traffic lights, but the driver must remain ready to take over at any moment.

The Legal Distinction: Because these are considered “assistive” rather than “fully autonomous,” Tesla’s legal defense often hinges on the fact that the driver is responsible for the vehicle’s actions, regardless of which software is engaged.

Tesla Autopilot Accident Statistics: The National and Local Reality

While Tesla claims that miles driven with Autopilot are safer than human-driven miles, federal data paints a more complicated picture of the risks involved.

- National Oversight: The National Highway Traffic Safety Administration (NHTSA) has opened multiple investigations into Tesla’s Autopilot following hundreds of crashes, specifically those involving collisions with emergency vehicles parked on the side of the road.

- The Austin Impact: As of early 2026, Tesla’s “Robotaxi” pilot and widespread FSD testing in Austin have led to localized incidents. Recent reports indicate that in certain urban environments like downtown Austin, autonomous systems can struggle with the unpredictability of heavy pedestrian traffic and constant construction.

Common Autopilot Failure Scenarios

In our experience as Austin car accident lawyers, we see several recurring “edge cases” where Tesla’s technology frequently fails:

- Phantom Braking: The vehicle suddenly slams on the brakes at highway speeds because the sensors misidentify an overhead bridge or a shadow as a solid obstacle.

- Failure to Detect Obstacles: The system may not register stationary objects, such as a stalled truck or a police car with flashing lights.

- Lane-Keeping Errors: The car may “hug” a lane line too closely or attempt to take an exit it wasn’t supposed to, leading to side-swipe accidents.

- Intersection Confusion: In FSD mode, the car may fail to yield the right of way or misinterpret a flashing yellow light.

- Construction Zone Issues: Faded lane markings or temporary orange cones on Austin’s ever-changing roads can confuse the vehicle’s cameras.

Who is Liable in an Autopilot Crash?

Determining liability in a Tesla crash is significantly more difficult than in a standard “rear-end” accident. It often involves a mixed liability scenario.

1. Driver Responsibility

Under Texas Transportation Code § 545.454, a human operator is generally required to remain attentive. If the driver was distracted—playing a game, texting, or even sleeping—they will likely bear the primary share of liability.

2. Product Liability Claims (Suing Tesla)

If the driver was using the system exactly as intended and the software made a critical error, you may have a claim against Tesla under the Texas Products Liability Act (Chapter 82 of the Texas Civil Practice and Remedies Code). These claims usually fall into three categories:

- Design Defects: The software’s algorithm is fundamentally unsafe.

- Marketing Defects: Tesla’s marketing leads drivers to believe the car is more capable than it is, causing them to lower their guard.

- Software Defects: A specific bug in a software update caused the system to fail.

3. Mixed Liability

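Texas follows a modified comparative negligence rule, which the statute calls proportionate responsibility. A jury assigns each party a percentage of fault. For example, it could find the Tesla driver 60% at fault for not paying attention and Tesla 40% at fault for a design flaw that allowed the accident to happen. An injured person’s own share of fault reduces their recovery, and a share above 50% bars it entirely.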
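
To see how fault percentages can translate into dollars, here is a minimal Python sketch of the arithmetic. The function name `allocate_damages` and the $100,000 damages figure are hypothetical and chosen only for illustration. The sketch assumes a simple proportional split with a bar on recovery when the injured person is more than 50% at fault. It leaves out joint and several liability, settlement credits, and damages caps, so it is not a prediction of any real verdict.

```python
def allocate_damages(total_damages, plaintiff_pct, defendant_pcts):
    """Split a damages award by fault percentage (illustrative only).

    total_damages  -- hypothetical dollar amount found by the jury
    plaintiff_pct  -- injured person's own share of fault (0-100)
    defendant_pcts -- dict mapping each defendant to its share (0-100)
    """
    # All shares of fault must add up to 100%.
    if abs(plaintiff_pct + sum(defendant_pcts.values()) - 100) > 1e-9:
        raise ValueError("fault percentages must total 100")

    # Assumed bar rule: more than 50% at fault means no recovery.
    if plaintiff_pct > 50:
        return {name: 0.0 for name in defendant_pcts}

    # Simplification: each defendant pays only its own percentage.
    return {
        name: total_damages * pct / 100
        for name, pct in defendant_pcts.items()
    }


# The 60/40 split from the example above, with a hypothetical $100,000 award.
print(allocate_damages(100_000, 0, {"Tesla driver": 60, "Tesla": 40}))
# {'Tesla driver': 60000.0, 'Tesla': 40000.0}
```

If the injured person were also found 10% at fault, with the driver at 54% and Tesla at 36%, the same function would return $54,000 and $36,000, for a total recovery of $90,000.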

Texas Laws on Autonomous Vehicles

Texas is a leader in autonomous vehicle (AV) legislation. Senate Bill 2205 established that AVs can operate on Texas roads without a human driver if they meet certain insurance and safety requirements.

However, for most Teslas on the road today, the law still treats the human in the driver’s seat as the “operator.” This means you still owe other road users a duty of care: you are responsible for avoiding hazards, even if your car’s AI doesn’t see them.

Insurance Complications: Who Pays?

Standard Auto Insurance: Most Texas insurance policies will cover an Autopilot crash like any other accident, provided the driver wasn’t committing a crime. However, if the insurer believes the manufacturer is at fault, they may seek subrogation—suing Tesla to recover the money they paid out.

Tesla Insurance: Tesla offers its own insurance product in Texas, which uses “Real-Time Driving Behavior” to set premiums. This adds a layer of complexity, as Tesla essentially acts as both the manufacturer and the insurer, creating a potential conflict of interest during a claim.

Proving the Case: Tesla’s Data Logs

The most critical evidence in a Tesla accident comes from the Event Data Recorder (EDR) and the vehicle’s data logs.

- What it tracks: Steering input, brake application, and whether Autopilot was engaged.

- The Challenge: Tesla is protective of its data. While owners can request their data through Tesla’s privacy portal, an attorney often needs to issue a subpoena or a “preservation letter” immediately to ensure that video footage from the car’s eight cameras isn’t overwritten.

Why You Need a Specialized Austin Tesla Accident Lawyer

You aren’t just fighting a negligent driver; you are potentially fighting one of the largest tech companies in the world. These cases require:

- Accident Reconstruction: Experts who can interpret EDR data and sensor logs.

- Software Forensics: Understanding which version of FSD was running and its known bugs.

- Navigating Precedents: Following landmark cases where juries have begun to hold Tesla partially liable for Autopilot malfunctions.

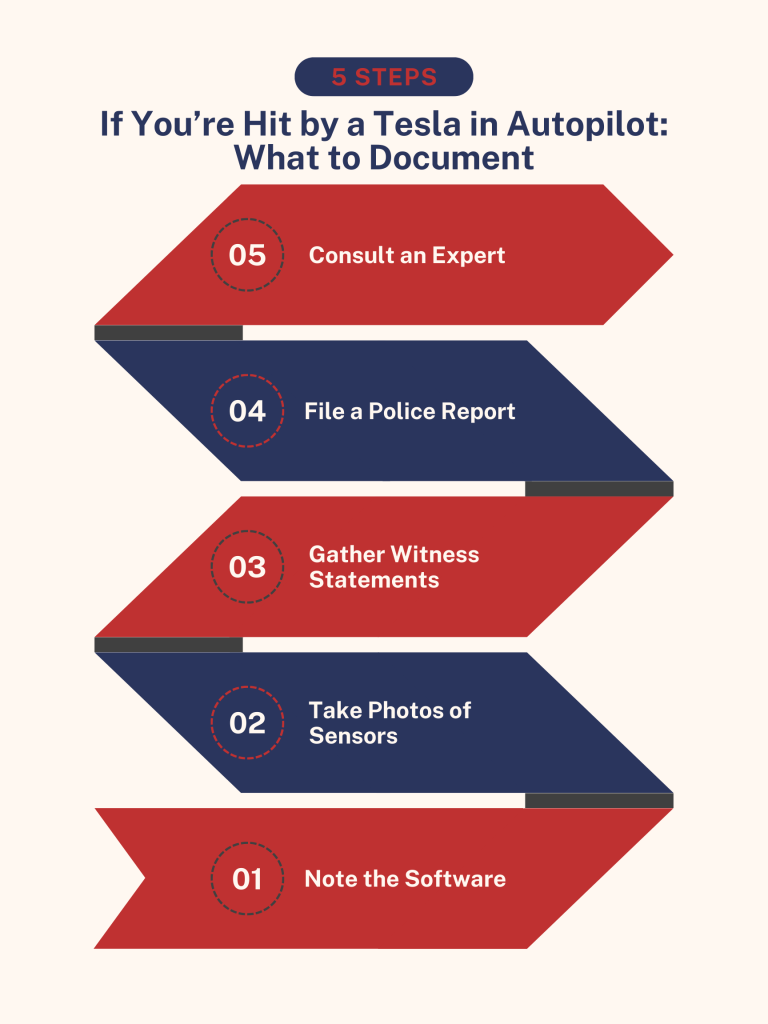

If You’re Hit by a Tesla in Autopilot: What to Document

- Note the Software: If possible, ask the driver or look at the dash to see if Autopilot/FSD was active.

- Photos of Sensors: Take photos of the Tesla’s cameras (windshield, pillars, and fenders) to see if they were obscured by dirt or debris.

- Witness Statements: Find people who saw the car’s behavior (e.g., “The car didn’t even slow down”).

- Police Report: Ensure the officer notes whether the driver claimed the car was in “self-driving mode.”

- Consult an Expert: Contact Joe Lopez Law to ensure a specialized investigator can preserve the vehicle’s electronic data before it is overwritten.

Reach Out Today for a Free Tesla Accident Case Evaluation

Accidents involving autonomous technology are the new frontier of personal injury law in Texas. If you’ve been injured in an Austin Tesla accident, don’t navigate the insurance and legal maze alone.

Contact Joe Lopez today. We have the experience and resources to hold negligent drivers and multi-billion-dollar manufacturers accountable. Call us at (512) 580-9962 or fill out our online form for a free consultation.